Developing Safety Critical SoCs

By: Enrique Martinez – Functional Safety Manager at EnSilica

A failure in a safety critical SoC can result in catastrophic consequences. Traditional hardware and software development methodologies therefore require an upgrade to deal with the increasing complexity of today’s ICs while navigating the demanding landscape of safety compliance.

In this article we outline an approach to functional safety that ensures SoCs have safety designed in from the start and that they comply with a wide variety of safety standards, including IEC 61508, ISO 26262, DO-178 and IEC 62304.

Safety engineering is typically associated with the automotive, aerospace, medical, industrial, nuclear power and rail industries. Today, these and many other industries make extensive use of electronic systems that contain complex SoCs (System-on-Chip) where digital, analog and RF processing, power management and a host of other functionality are integrated into a single silicon chip. Where an application includes critical safety functions, and a malfunction can lead to a high risk of accident, comprehensive functional safety must be integrated and managed on-chip.

A typical example of this high level of integration is in the automotive industry, one of the fastest growing segments of the electronics industry. Emerging applications like ADAS (Advanced Driver-Assistance Systems) require a high degree of safety to be built in while also relying on a complex range of base technologies such as radar, high-precision positioning and wireless communications. The final solution often must fit a very small space, withstand a challenging operational environment, offer reduced power consumption and be delivered at low cost. Together, these factors mean that the only competitive solution is one based on a custom SoC, ASIC or FPGA platform.

Today’s industrial, aerospace, medical and IoT applications, even with lower market volumes than automotive, must similarly combine new levels of complexity with high degrees of reliability and safety, and here too an SoC design is the only feasible solution.

The development of silicon chips containing millions of transistors and embedded software in a few square millimetres presents by itself a considerable challenge; this challenge is compounded when safety management is a factor. Meeting the demanding requirement for safety makes it necessary to coordinate many different skills and follow a very strict process in order to guarantee that the final product will meet the necessary safety level, in addition to its intended functionality. In summary, when a failure in such pieces of silicon could result in catastrophic consequences, traditional hardware and software development methodologies are often found to require an upgrade.

Different industrial norms (safety standards) have been published to address the design of safety-critical electronics, particularly IEC 61508 for industry and ISO 26262 for automotive systems, along with others such as DO-178 and DO-254 for airborne systems and IEC 62304 for medical systems.

The IEC 61508 standard was originally developed as a multi-purpose safety norm which could be applied to automotive. However, automotive electronics has some particular characteristics, like high volume production and multi-supplier distributed developments, so it was convenient to reshape and upgrade IEC 61508 into the new ISO 26262 standard which was tailored for automotive. IEC 61508 remains a reference safety text in the non-automotive field, especially in industrial applications.

The ISO 26262 standard was originally published in 2011 and includes 10 parts dedicated to covering the different aspects of the product life cycle, with Part 5 dedicated to hardware development. However, since its publication there had been criticism that IC development was not properly covered. The ISO listened to these appeals and in 2018 a Part 11 was added that is dedicated to how IC design must be understood within the ISO 26262 context.

Another topic not covered in the original 2011 standard was the “safety of the intended function” (SOTIF). Again, the ISO has now covered this gap with a new complementary standard, ISO/PAS 21448, published in 2019. It complements ISO 26262 with topics such as system misuse due to human error.

Therefore, after some years of learning and refinement, the automotive industry now has a comprehensive set of standards to tackle safety within electronic systems, including SoCs. Automakers now require that their ECU tier-1 suppliers are ISO 26262 compliant for all safety relevant systems.

Developing safety critical ICs requires considerable additional effort in terms of project management, development, documentation and tools, when compared to non-safety critical consumer products.

An engineer faced with a safety critical design has two important considerations:

In the final design the engineer must show that the risk has been minimized to acceptable levels.

The safety standards mentioned above, despite their different structures and styles, share a common backbone:

SoC development requires many activities involving different skills: system architecture, hardware and software partitioning and development, verification, and releasing the part for mass production. Each of these activities has specific implications in a safety-critical product.

In addition to the traditional simulation-based approach to hardware design (from RTL descriptions or schematics), safety critical systems make it necessary to evaluate how failing sub-blocks can contribute to violating a safety goal and to propose the necessary remedies. This requires the impact of random errors and soft errors to be quantified in the different chip structures, which is a challenging task in devices with more than a million gates.
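As an illustration of what quantifying the impact of random faults can look like, the sketch below computes a simplified single-point fault metric in the style of the hardware architectural metrics of ISO 26262 Part 5: one minus the ratio of residual (undetected, dangerous) failure rate to total failure rate. The block names, FIT values, safe fractions and diagnostic coverage figures are all hypothetical, and the model is deliberately reduced; it is not EnSilica's analysis flow.

```python
from dataclasses import dataclass


@dataclass
class Block:
    name: str
    fit: float            # total failure rate in FIT (failures per 1e9 hours), hypothetical
    safe_fraction: float  # share of faults that cannot violate the safety goal
    diag_coverage: float  # share of dangerous faults caught by a safety mechanism


def residual_fit(block: Block) -> float:
    """Failure rate that can violate the safety goal and escapes detection."""
    dangerous = block.fit * (1.0 - block.safe_fraction)
    return dangerous * (1.0 - block.diag_coverage)


def single_point_fault_metric(blocks: list[Block]) -> float:
    """Simplified metric: 1 - (sum of residual FIT) / (sum of total FIT)."""
    total = sum(b.fit for b in blocks)
    residual = sum(residual_fit(b) for b in blocks)
    return 1.0 - residual / total


# Hypothetical sub-blocks of an SoC; the numbers are for illustration only.
blocks = [
    Block("cpu_core", fit=100.0, safe_fraction=0.5, diag_coverage=0.99),
    Block("sram", fit=300.0, safe_fraction=0.0, diag_coverage=0.99),
    Block("adc", fit=100.0, safe_fraction=0.5, diag_coverage=0.90),
]

if __name__ == "__main__":
    for b in blocks:
        print(f"{b.name}: residual {residual_fit(b):.2f} FIT")
    print(f"metric: {single_point_fault_metric(blocks):.1%}")
```

In this made-up example the ADC, with the weakest diagnostic coverage, contributes the largest residual failure rate despite its modest raw FIT, which is the kind of finding that points to where an additional safety mechanism is worth adding.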

Equally, software engineering is not an easy task in safety critical systems. Some infamous car accidents, massive recalls, and even fatal plane crashes have been attributed to software bugs. When a software image is going to be embedded into an SoC ROM, the task becomes especially delicate. A robust process for specifying, coding, documenting, and verifying software is essential in order to guarantee the product's compliance with safety norms. The recommended coding guidelines, such as MISRA C, restrict how a language may be used; quality metrics such as code or condition coverage are often required; and every software unit may need to be tested individually against its requirements. All these steps are necessary for ISO 26262 or IEC 61508 compliance.

Safety also has an impact on the way organisations need to operate. The basic principle is that, for any work product, the verifier must be independent from the author. The various safety standards describe this principle in different forms, requesting different independence levels and confirmation reviews depending on the safety level under consideration.

Finally, how do you guarantee to a customer or project partner that your SoC is safe? Safety standards require that projects undergo an independent assessment, including specific variants depending on the safety level. An independent auditor will verify the safety case: the collection of documents and work products that prove that a project has been properly executed according to the relevant standard.

EnSilica adhere to international quality standards, with an ISO 9001:2015 certificate that guarantees that all business activities are performed in line with rigorous processes. However, compliance with safety standards like ISO 26262 or IEC 61508 needs something more.

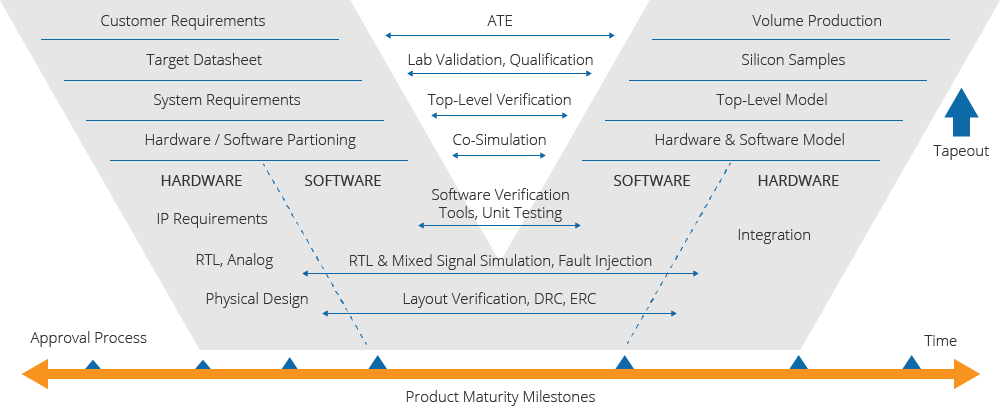

For the whole product lifecycle, EnSilica use a V-model based process for developing safety critical SoCs, from product conception to final volume production. This includes robust processes for documentation management, configuration management and traceability. Strict hardware and software verification processes are in place, with a consolidated approval flow assigning defined milestones for the different product maturity stages. Figure 1 shows an overall picture of the process.

A top-down approach is followed for the architecture definition and the propagation of requirements to lower levels, especially those involving safety. For every hierarchical level, appropriate test cases verify the required functionality, with a final sign-off process performed prior to tape-out.

Figure 1. The EnSilica development and verification process
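The traceability aspect of such a process can be sketched as a simple consistency check: every requirement should be covered by at least one test case, and every lower-level requirement should trace back to an existing parent. The requirement IDs, test case IDs and data layout below are hypothetical and only illustrate the idea; real projects normally rely on a requirements management tool.

```python
# Hypothetical requirements: each entry points at its parent requirement.
requirements = {
    "SYS-001": {"parent": None, "safety": True},
    "HW-010": {"parent": "SYS-001", "safety": True},
    "SW-020": {"parent": "SYS-001", "safety": True},
    "HW-011": {"parent": "SYS-999", "safety": False},  # parent does not exist
}

# Hypothetical test cases: each lists the requirements it verifies.
test_cases = {
    "TC-100": ["HW-010"],
    "TC-101": ["SYS-001"],
}


def find_gaps(requirements, test_cases):
    """Return (untested requirements, requirements with a missing parent)."""
    covered = {req for reqs in test_cases.values() for req in reqs}
    untested = sorted(r for r in requirements if r not in covered)
    orphaned = sorted(
        r
        for r, info in requirements.items()
        if info["parent"] is not None and info["parent"] not in requirements
    )
    return untested, orphaned


if __name__ == "__main__":
    untested, orphaned = find_gaps(requirements, test_cases)
    print("Requirements without a test case:", untested)
    print("Requirements with a missing parent:", orphaned)
```

A check of this kind would typically run before each milestone, so that gaps in requirement coverage are found well before sign-off.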

EnSilica follow a rigorous EDA tool selection process for all development and verification activities. Only first-class design tools that have passed the validation process required by the applicable safety standard are considered, covering areas such as logic synthesis, simulation, physical implementation and STA verification.

Every safety-critical project needs a safety analysis. This essential step is an important difference compared with the traditional design approach. Design expertise plays a crucial role in this analysis: it takes in-depth know-how to understand what can go wrong in a circuit, IP block, or software module, and to put the necessary mitigation strategies in place. Figure 2 describes this workflow, which often ends with an independent third-party confirmation, as required by the safety standard.

Figure 2: The safety analysis process

Verifying that the implemented safety measures are effective introduces an additional step, one that is required by the standard. For complex SoCs, the only feasible way to do this is through dedicated fault injection simulation. These software tools simulate common faults occurring in a silicon die, e.g. random faults and soft errors, so that we can verify that they are properly detected or corrected, leading to safe system behaviour.
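The principle behind a fault injection campaign can be shown with a toy model, far simpler than the commercial tools used on real netlists. The sketch below assumes a hypothetical 8-bit register protected by a single even-parity bit, flips every possible combination of one or two bits, and counts how many injected faults the parity checker flags.

```python
from itertools import combinations

WIDTH = 8  # data bits in the hypothetical protected register


def parity(value: int) -> int:
    """Return 1 if the number of set bits is odd, else 0."""
    return bin(value).count("1") % 2


def encode(data: int) -> int:
    """Store an even-parity bit just above the data bits."""
    return data | (parity(data) << WIDTH)


def fault_detected(word: int) -> bool:
    """Checker: odd overall parity in the stored word signals a fault."""
    return parity(word) == 1


def run_campaign(data: int, flips_per_fault: int) -> tuple[int, int]:
    """Inject every combination of bit flips; return (detected, total)."""
    golden = encode(data)
    detected = 0
    total = 0
    for bits in combinations(range(WIDTH + 1), flips_per_fault):
        faulty = golden
        for bit in bits:
            faulty ^= 1 << bit  # flip one stored bit
        total += 1
        detected += fault_detected(faulty)
    return detected, total


if __name__ == "__main__":
    for flips in (1, 2):
        detected, total = run_campaign(0xA5, flips)
        print(f"{flips}-bit faults: {detected}/{total} detected")
```

The campaign shows that parity catches every single-bit flip and no double-bit flip, which is why memories exposed to multi-bit upsets are usually given a stronger mechanism such as an error-correcting code.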

SoCs may include processing IPs, like MCUs or DSPs, whose behaviour often relies on a software image coded inside the silicon die, for example in the form of a ROM or OTP memory. Debugging embedded software has always been a challenging activity, and when the code image is inside a piece of silicon, the challenge is even greater, making traditional debugging tools unusable. For SoC development, EnSilica use hardware-software co-simulation models, which enable the whole system behaviour to be observed, even before any real silicon is available.

Another key aspect in developing safety critical systems is the selection of suppliers. EnSilica work only with silicon fabs and other subcontractors with demonstrated capability to manufacture parts according to the relevant safety standards. All communication with suppliers and customers follows strict rules, including safety plans and development interface agreements (DIA) where necessary.

Third-party IP (processors, ADCs, etc.) often requires very specific design know-how that is only in the supplier’s hands, so it needs special attention. Integrating such IP blocks in a safety application requires that the IP development has undergone a process equivalent to the one requested by the FuSa target project. This requires a range of evidence to be provided, including an exchange of safety-related documentation between EnSilica and its suppliers/partners: the so-called “safety package”, or a collection of documents, that guarantees the validity of the IP under consideration. This process is shown in Figure 3.

For embedded processing IPs, Arm is one of EnSilica's preferred partners, with a broad offering of FuSa compliant cores and support tools.

Figure 3: IP documents exchange chain

Regarding internal organisation, EnSilica maintain a project-independent FuSa management structure, ensuring the necessary independence for establishing FuSa processes and providing the necessary training and support to all the engineers involved in safety critical products.

EnSilica offer OEMs the capability of developing SoC based solutions for their safety critical electronics, based on a leading-edge combination of robust FuSa compliant project management processes, first class suppliers and tools, and an outstanding team with all the necessary skills and experience to tackle the most ambitious goals in silicon technology.

If you are looking for an SoC development and supply partner with the right expertise and experience to bring your next safety-critical product to market quickly and efficiently, then please review our custom ASIC design and supply services, or our range of full-flow SoC design services. Alternatively, get in touch for an initial discussion or to request a Design Consultation.
